What does Fusion actually do?

Most people test models badly. They ask one question in one app, rewrite it slightly in another, forget what the first answer looked like, then declare a winner based on vibes. That falls apart fast when you are trying to choose a model for recurring work like writing memos, comparing plans, or checking which assistant handles your normal prompt style best. Fusion is aimed at that very ordinary but expensive mess. The newsletter states the value plainly: instead of opening five apps and guessing, you can compare outputs side by side and build a quick cheat sheet for work.

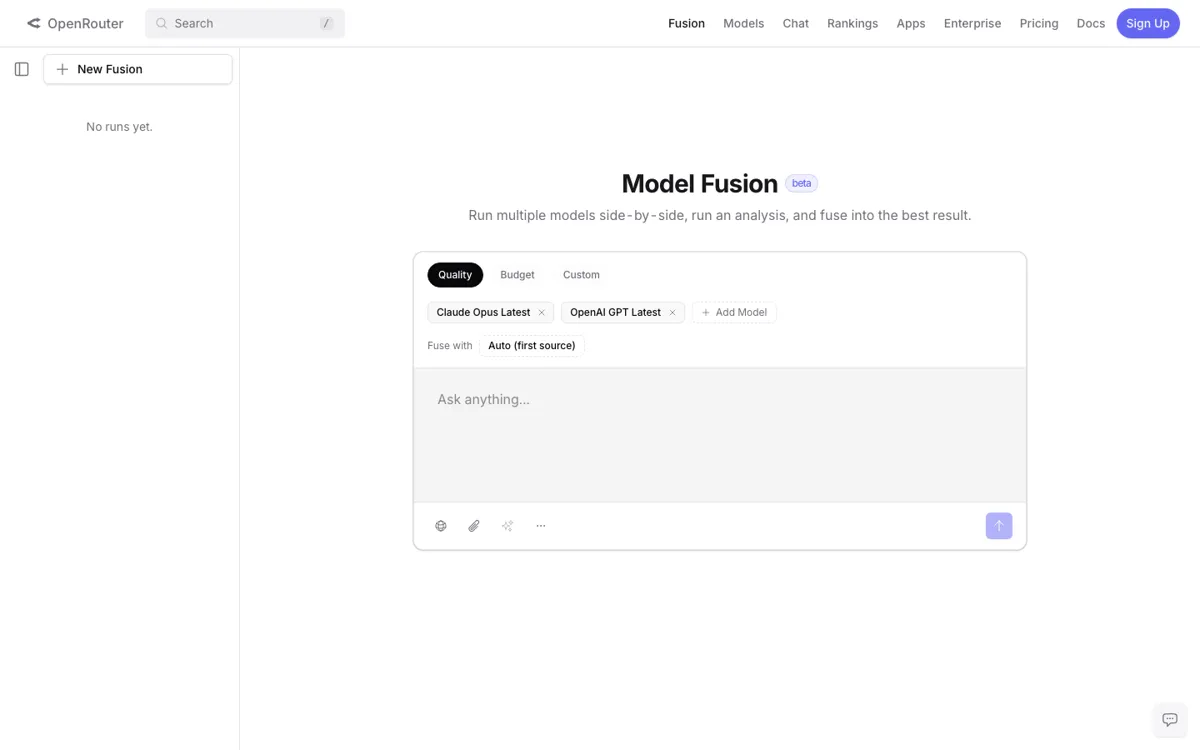

What makes Fusion useful is not raw model access by itself, because OpenRouter already gives access to many models elsewhere. The useful part is the dedicated comparison surface. The captured Fusion page confirms the product frame: a simple workspace for starting new comparison runs. The newsletter fills in the steps: create an account, choose whether to pay with OpenRouter credits or your own API keys, pick the models you want to compare, keep the prompt identical, and then inspect the side-by-side results. That is a much cleaner way to decide which model is strongest for a real prompt than manually hopping between separate apps.
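For readers who think in code, the core idea (one identical prompt, several models, results lined up next to each other) can be sketched in a few lines of Python. This is only an illustration of the comparison pattern, not Fusion's implementation or API: `compare_models`, `cheat_sheet`, and the stub models are hypothetical names made up for this example, and the stubs return canned text so the sketch runs offline.

```python
from typing import Callable, Dict

# Hypothetical stand-in: a "model" is any function that maps a prompt to a reply.
# In real use this would wrap an API call; here it is deliberately left abstract.
ModelFn = Callable[[str], str]


def compare_models(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send the identical prompt to every model and collect replies by model name."""
    return {name: ask(prompt) for name, ask in models.items()}


def cheat_sheet(prompt: str, results: Dict[str, str], width: int = 60) -> str:
    """Render the replies as plain text, one model per row, truncated to `width`."""
    lines = [f"Prompt: {prompt}", ""]
    name_width = max((len(name) for name in results), default=0)
    for name, reply in results.items():
        short = reply if len(reply) <= width else reply[: width - 3] + "..."
        lines.append(f"{name.ljust(name_width)} | {short}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Stub models (assumptions for illustration only); they just echo the prompt.
    models = {
        "model-a": lambda p: f"Short memo for: {p}",
        "model-b": lambda p: f"Detailed memo with three sections for: {p}",
    }
    prompt = "Draft a memo comparing two pricing plans."
    print(cheat_sheet(prompt, compare_models(prompt, models)))
```

The point of the design is that the prompt is passed through unchanged to every model, which is exactly the discipline that manual app-hopping tends to break.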