What does OpenHuman actually do?

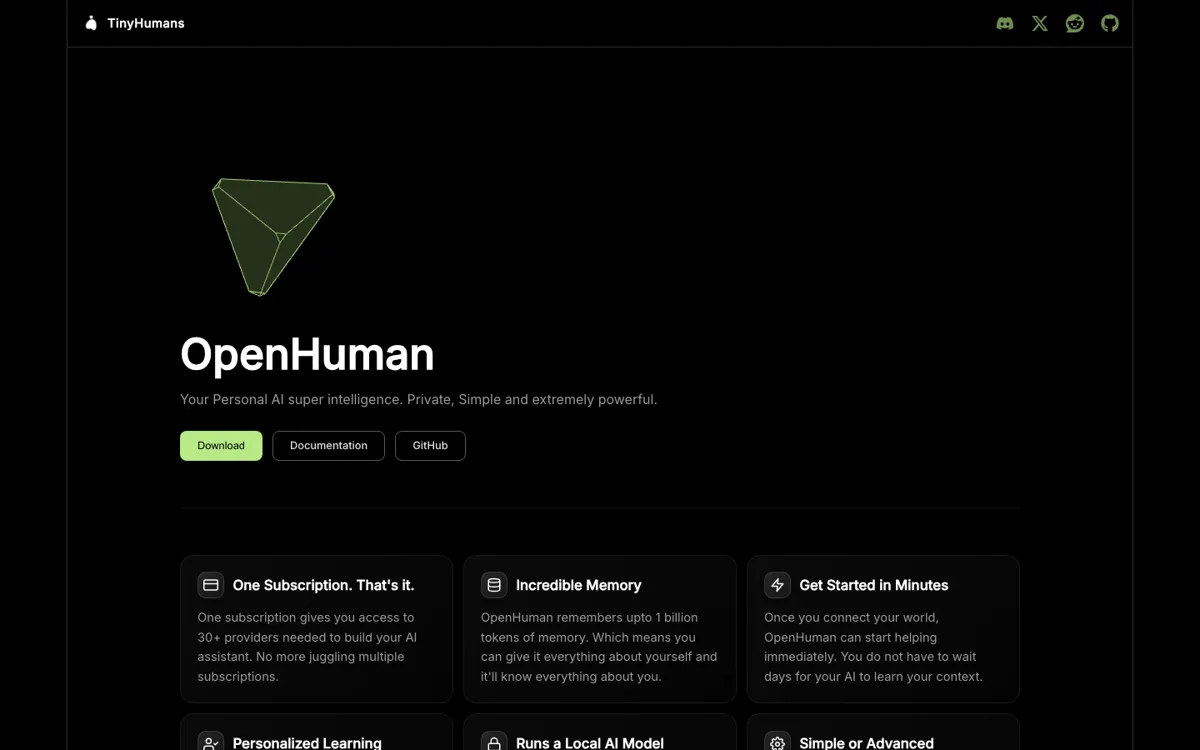

Most personal AI assistants still break at the same point: they sound helpful in a demo, then forget your world the moment the current thread ends. You ask one question, close the window, and tomorrow you are pasting in the same project links, email context, meeting notes, and half-finished thoughts all over again. OpenHuman is aimed directly at that failure mode. Its docs frame the product as a desktop assistant that keeps syncing connected sources such as Gmail, Slack, GitHub, Notion, and your own notes into a local memory tree, so the assistant has a running picture of your work instead of a one-shot prompt snapshot.

The product's strongest idea is not just that it stores memory, but that the memory is inspectable. OpenHuman says it writes that context into a local SQLite memory tree and mirrors the same reasoning chunks into Markdown files inside an Obsidian-style vault. You can open the vault yourself, read the files, and treat the memory as something visible rather than opaque. The docs also describe optional local AI through Ollama, one-click integrations for many services, and desktop-first install paths for Mac and Windows. Taken together, these make OpenHuman closer to a personal context layer with an assistant on top than a plain chat interface.
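To make the "SQLite tree mirrored into Markdown" idea concrete, here is a minimal sketch of what such a dual write could look like. It is not OpenHuman's actual schema or code: the `chunks` table, the `open_store` and `add_chunk` helpers, the front-matter fields, and the one-folder-per-source vault layout are all assumptions made for illustration.

```python
import sqlite3
from pathlib import Path


def open_store(db_path):
    """Open (or create) a hypothetical chunk table; parent_id forms the tree."""
    conn = sqlite3.connect(db_path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS chunks (
               id INTEGER PRIMARY KEY,
               parent_id INTEGER REFERENCES chunks(id),
               source TEXT NOT NULL,
               title TEXT NOT NULL,
               body TEXT NOT NULL
           )"""
    )
    return conn


def add_chunk(conn, vault, source, title, body, parent_id=None):
    """Insert one chunk into SQLite, then mirror it as a Markdown note."""
    cur = conn.execute(
        "INSERT INTO chunks (parent_id, source, title, body) VALUES (?, ?, ?, ?)",
        (parent_id, source, title, body),
    )
    conn.commit()
    chunk_id = cur.lastrowid

    # Assumed vault layout: one folder per source, one note per chunk,
    # named by row id so titles never have to be filesystem-safe.
    note_dir = Path(vault) / source
    note_dir.mkdir(parents=True, exist_ok=True)
    note_path = note_dir / f"{chunk_id:06d}.md"

    # YAML-style front matter keeps the tree link visible in the note itself.
    parent_line = f"parent: {parent_id}\n" if parent_id is not None else ""
    note_path.write_text(
        f"---\nid: {chunk_id}\nsource: {source}\n{parent_line}---\n\n"
        f"# {title}\n\n{body}\n",
        encoding="utf-8",
    )
    return chunk_id, note_path
```

Calling `add_chunk(conn, "vault", "gmail", "Re: Atlas timeline", "Deadline moved.", parent_id=1)` would add a row to the database and write a note under `vault/gmail/` that any Markdown editor can open. The point of the sketch is the design choice the docs describe: the database serves the assistant, while the mirrored files let a person audit what the assistant remembers.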