What does Needle actually do?

Needle caught fire because its core pitch is easy to understand and unusually sharp. Instead of promising a new model that is a bit better at everything, it focuses on one behavior that matters a lot for agent systems: tool calling. Then it compresses that story even further by attaching it to a tiny 26M-parameter model. That combination gives developers a very specific reason to pay attention. The question is not whether the model is a universal assistant. The question is whether useful tool-use behavior can survive aggressive size reduction well enough to change how agent stacks are built. That is the part people were reacting to on Hacker News.

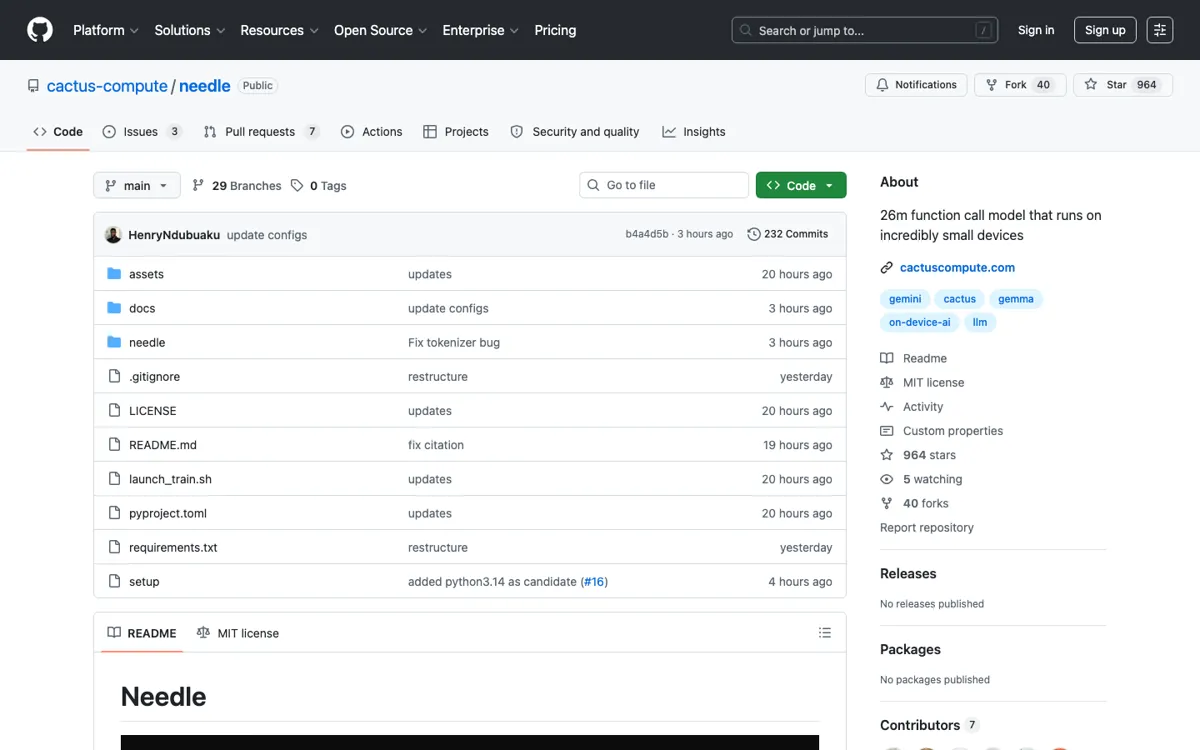

The project also makes it clear that this is a repo-first artifact, not a polished software product. The GitHub repository, README, and architecture notes are the delivery mechanism. That means the value sits with engineers who want to run tests, inspect the implementation, compare behaviors, and maybe borrow ideas or weights for their own systems. It is a stronger fit for model builders, infra teams, and experimental agent developers than for buyers looking for a workflow product. The open repo is a feature here, but it also shifts the burden of evaluation and integration back onto the team using it.
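
To make that evaluation burden a little more concrete, here is a minimal sketch of the kind of check a team might write before wiring a small tool-calling model into an agent stack. It assumes the model emits each tool call as a JSON object with `name` and `arguments` fields; that shape, the `TOOLS` registry, and the function names are all hypothetical illustrations, not Needle's actual output format or API.

```python
import json

# Hypothetical tool registry: tool name -> required argument names and types.
# These tools are made up for illustration only.
TOOLS = {
    "get_weather": {"city": str},
    "add": {"a": (int, float), "b": (int, float)},
}


def validate_tool_call(raw: str) -> tuple[bool, str]:
    """Check that raw model output is a well-formed call to a known tool."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return False, "not valid JSON"
    if not isinstance(call, dict):
        return False, "not a JSON object"

    name = call.get("name")
    if not isinstance(name, str) or name not in TOOLS:
        return False, f"unknown tool: {name!r}"

    args = call.get("arguments")
    if not isinstance(args, dict):
        return False, "arguments must be an object"

    spec = TOOLS[name]
    missing = sorted(set(spec) - set(args))
    if missing:
        return False, f"missing arguments: {missing}"
    extra = sorted(set(args) - set(spec))
    if extra:
        return False, f"unexpected arguments: {extra}"

    for key, expected_type in spec.items():
        if not isinstance(args[key], expected_type):
            return False, f"wrong type for argument {key!r}"
    return True, "ok"


def pass_rate(outputs: list[str]) -> float:
    """Fraction of model outputs that are structurally valid tool calls."""
    if not outputs:
        return 0.0
    return sum(validate_tool_call(o)[0] for o in outputs) / len(outputs)
```

A check like this only measures whether calls are structurally valid, not whether the model picked the right tool for the request, so a real evaluation would pair it with task-level test cases.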