What does Deepdub actually do?

A lot of dubbing tools are optimized for speed-first localization, which works fine until the source material carries a real performance, brand risk, or distribution value. Once the content is a film scene, a streaming catalog, a FAST channel, or a regulated enterprise training library, the buyer is no longer just choosing between faster and slower output. They also have to decide whether the dub preserves the original emotion, stays inside compliance rules, uses properly licensed voices, and goes through a review process that will not embarrass the brand later. Deepdub is aimed squarely at that harder layer of the problem, and its official site consistently frames the product around media pipelines, corporate localization, and voice deployment at scale rather than creator convenience alone.

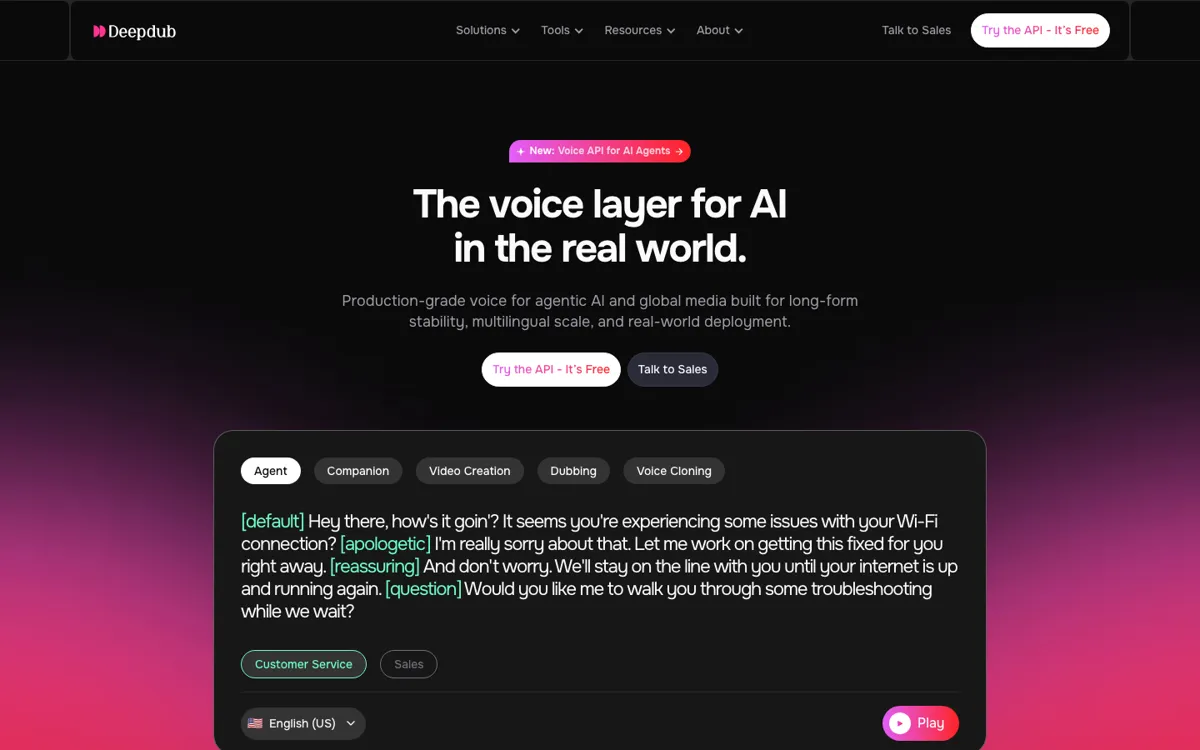

The offering is broader than a single model demo. Deepdub combines emotional text-to-speech, speech-to-speech, voice cloning, accent control, a voice library, real-time APIs, managed services, and human-assisted production steps. The docs add concrete developer routes through REST, WebSocket, Python, and JavaScript integration, while the API marketing pages emphasize low latency, commercial licensing, and enterprise support. On the dubbing side, the FAQ repeatedly ties the product to real post-production and localization work, including native-speaking human adapters and in-house production oversight. That combination suits buyers who need more than a voice sample generator and are trying to operationalize multilingual audio output.
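To make "REST integration" a little more concrete, here is a minimal sketch of how a client might assemble a JSON text-to-speech request. It is not Deepdub's API: the endpoint URL, the field names (`text`, `voice_id`, `locale`), and the bearer-token auth scheme are all hypothetical placeholders, so check the official docs for the real schema. The sketch only builds the request with Python's standard `urllib.request` and does not send it.

```python
import json
import urllib.request

# Hypothetical placeholder endpoint: NOT Deepdub's real URL.
HYPOTHETICAL_TTS_URL = "https://api.example.com/v1/tts"


def build_tts_request(text: str, voice_id: str, locale: str, api_key: str) -> urllib.request.Request:
    """Assemble (but do not send) a JSON text-to-speech POST request.

    Every field name here is a hypothetical stand-in for whatever
    schema the real API defines.
    """
    payload = {
        "text": text,          # script line to synthesize
        "voice_id": voice_id,  # a licensed voice from the library (hypothetical field)
        "locale": locale,      # target language/accent (hypothetical field)
    }
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        HYPOTHETICAL_TTS_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # auth scheme is an assumption
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_tts_request("Welcome back.", "voice-123", "es-ES", "YOUR_API_KEY")
    print(req.get_method(), req.full_url)
```

Keeping request construction separate from sending makes the payload easy to inspect or unit-test before wiring in a real endpoint; a low-latency streaming setup would more likely use the WebSocket route instead.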