What does LumiChats Offline(free) actually do?

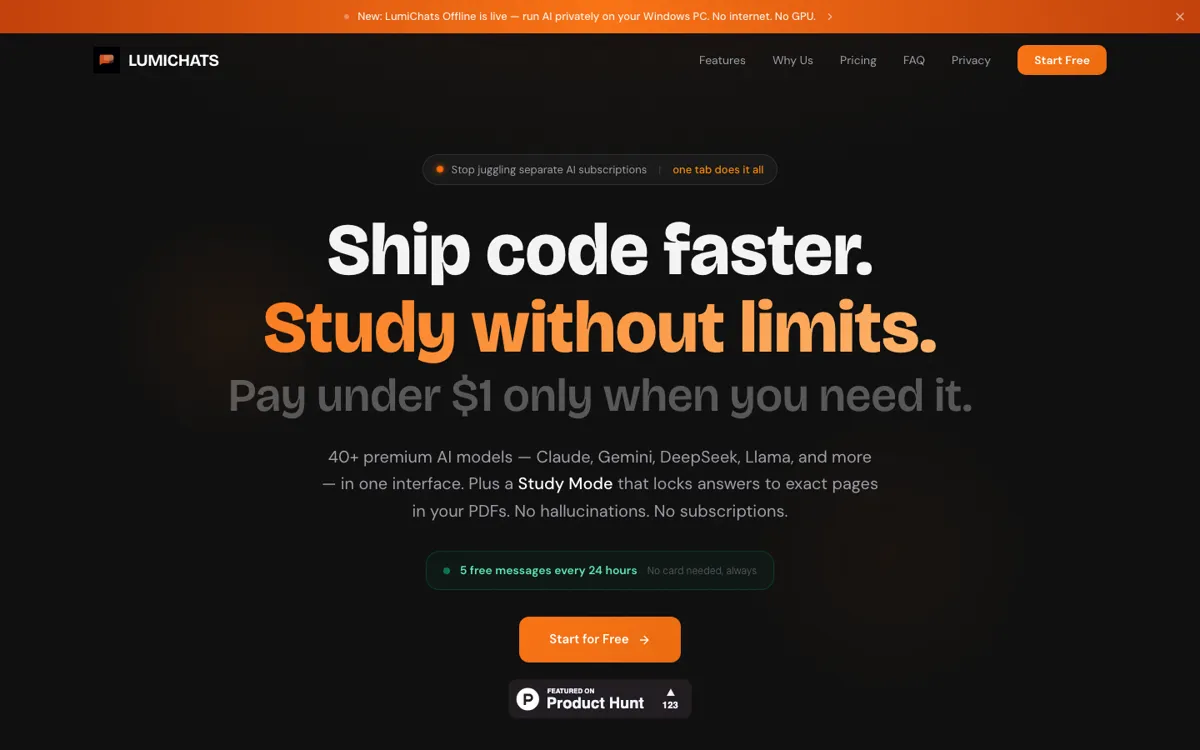

LumiChats Offline(free) is built around a simple tension in the AI market: a lot of people want the convenience of a general-purpose assistant but do not want their files, chats, and prompts living inside another vendor's account. The homepage answers that tension with a blunt promise: use Claude, GPT-5, and Gemini in one place, fully offline, with zero data collection and no monthly fee. The docs broaden that further with support for Ollama, LM Studio, Hugging Face GGUF models, OCR, PDFs, voice tools, coding help, and image understanding. In plain terms, the app is trying to be the local replacement for the usual pile of browser tabs and subscription chat tools.

The strongest part of the pitch is range. LumiChats is not just trying to be an offline chatbot. It wants to be the one desktop app where you read documents, ask questions over files, run OCR, test models, do lightweight coding work, and switch between different AI backends without moving your data into the cloud. That matters for users who care more about keeping work on-device than about squeezing out the absolute best hosted-model experience. A free local app becomes much more interesting when it can cover enough daily tasks that you stop opening three or four separate tools for the same project.