What does Local Deep Research actually do?

Most chat-based AI tools work well until the question gets long, messy, or citation-sensitive. That is the gap Local Deep Research is trying to fill. The repository does not present itself as a lightweight writing assistant or a general chatbot. It is framed as an AI-powered research assistant that can search the web, scan academic sources such as arXiv and PubMed, look through your private documents, and then synthesize the results into a source-backed answer. In plain terms, it is for the moment when a single model answer is not enough and you need the system to gather evidence before it responds.
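
To make the "gather evidence first, then answer" idea concrete, here is a minimal conceptual sketch in Python. It is not Local Deep Research's actual code or API: `Evidence`, `gather`, `synthesize`, and the two stand-in searchers are hypothetical names invented for illustration, and the synthesis step is plain string formatting where a real system would presumably call a language model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Evidence:
    """One retrieved item (hypothetical structure, for illustration only)."""
    source: str   # e.g. "arxiv", "web", "local-docs"
    title: str
    snippet: str


# A searcher is any function that maps a query to a list of evidence items.
Searcher = Callable[[str], List[Evidence]]


def gather(query: str, searchers: List[Searcher]) -> List[Evidence]:
    """Run every searcher on the query and pool the results."""
    found: List[Evidence] = []
    for search in searchers:
        found.extend(search(query))
    return found


def synthesize(query: str, evidence: List[Evidence]) -> str:
    """Build a numbered, source-tagged summary (stand-in for an LLM step)."""
    if not evidence:
        return f"No sources found for: {query}"
    lines = [f"Findings for: {query}"]
    for i, item in enumerate(evidence, start=1):
        lines.append(f"[{i}] {item.snippet} ({item.source}: {item.title})")
    return "\n".join(lines)


# Stand-in searchers that return canned data instead of calling real services.
def fake_arxiv(query: str) -> List[Evidence]:
    return [Evidence("arxiv", "Example paper", f"Placeholder abstract about {query}.")]


def fake_local_docs(query: str) -> List[Evidence]:
    return [Evidence("local-docs", "notes.txt", f"Placeholder note about {query}.")]


if __name__ == "__main__":
    evidence = gather("sleep and memory", [fake_arxiv, fake_local_docs])
    print(synthesize("sleep and memory", evidence))
```

The point of the sketch is the ordering: every statement in the final output is tied to a numbered source that was collected before any answer was written.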

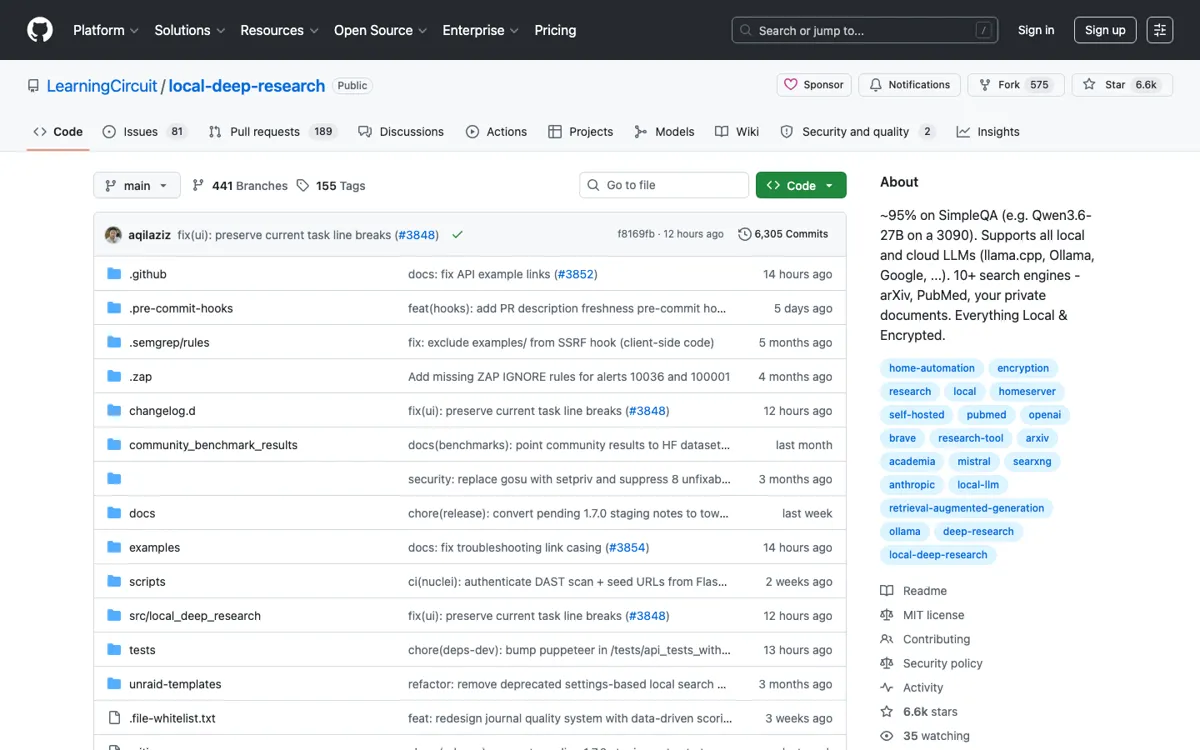

The setup model tells you a lot about who this tool is really for. The project points users toward Docker, pip install, and a local interface on port 5000, which means the product behaves like a self-hosted research workbench rather than a polished website you sign into in 30 seconds. That tradeoff is not incidental. Running locally lets you choose between local and cloud models, keep your documents within your own environment, and build up a searchable knowledge base from the material you collect. For users who care about privacy, control, or academic-style retrieval, that can be the whole reason to pick it.
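
Because the interface is served locally rather than hosted, a quick first check after installation is whether anything is listening on that port. The sketch below uses only Python's standard library. The helper name `is_port_open` is made up for this example, and the host `127.0.0.1` is an assumption; only port 5000 comes from the project's setup notes as described above.

```python
import socket


def is_port_open(host: str = "127.0.0.1", port: int = 5000, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection raises OSError (refused, timed out, unreachable) on failure.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    if is_port_open():
        print("Something is listening on 127.0.0.1:5000")
    else:
        print("Nothing is listening on 127.0.0.1:5000 yet")
```

A successful connection only shows that some process is bound to the port; it does not confirm that the process is Local Deep Research.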