What does Adobe Firefly actually do?

A lot of generative AI tools are easy to demo and hard to adopt. They can spit out one image or one clip quickly, but the rest of the job still happens somewhere else. You have to export assets, rebuild them in an editor, explain provenance to a client, and patch together separate tools for image, video, and audio work. That gap matters for real creative teams, because the bottleneck is rarely a single prompt. It is the messy handoff between ideation and production. Adobe Firefly is aimed directly at that problem. Adobe's official pages consistently tie generation to editing, boards, custom models, and other Adobe apps, which suggests the product is meant to sit inside a broader content pipeline rather than serve as a standalone novelty tool.

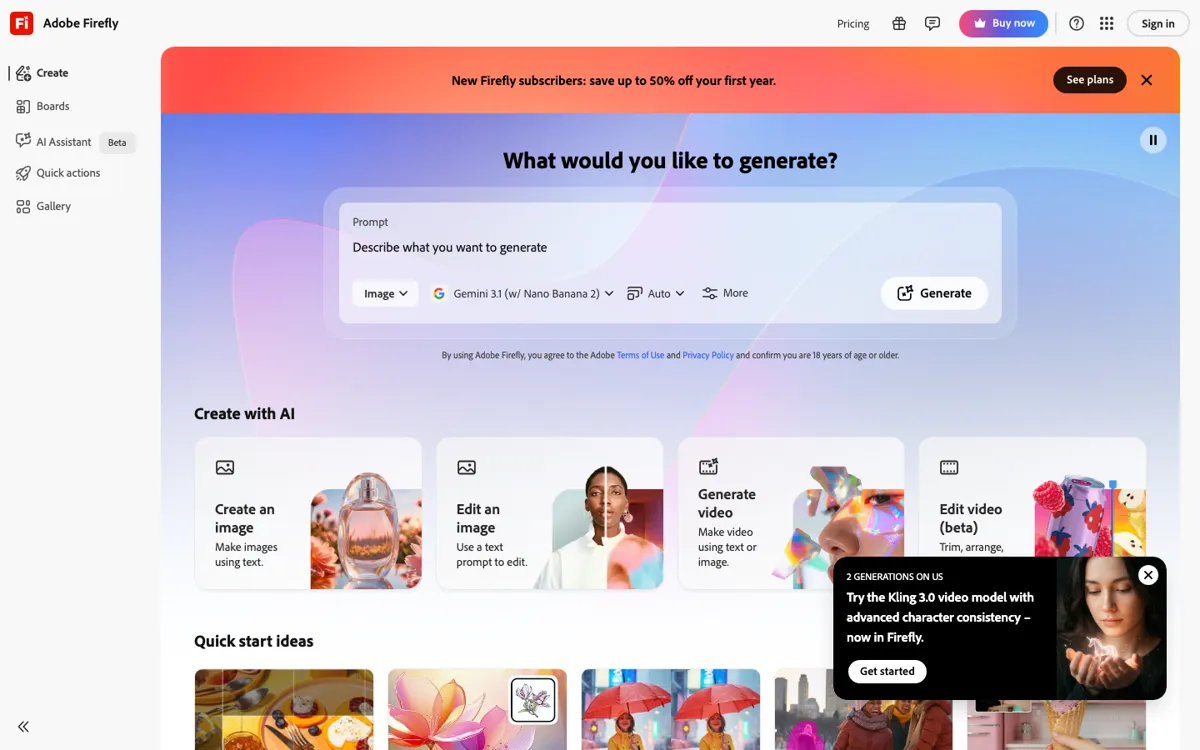

What makes Firefly useful is the way it bundles multiple generative jobs into one production-oriented environment. The product pages show image generation, video generation, sound effect creation, vector graphics, custom models, and idea boards under the same umbrella, while the broader Adobe site keeps pointing toward Photoshop, Illustrator, and Adobe Express as the next step after generation. That matters if your work starts with a prompt but cannot end there. Instead of generating assets in one product and rebuilding them in another, you can treat Firefly as the front end of a larger creative workflow, where outputs stay editable and closer to the rest of the team's toolchain.