What does Veo actually do?

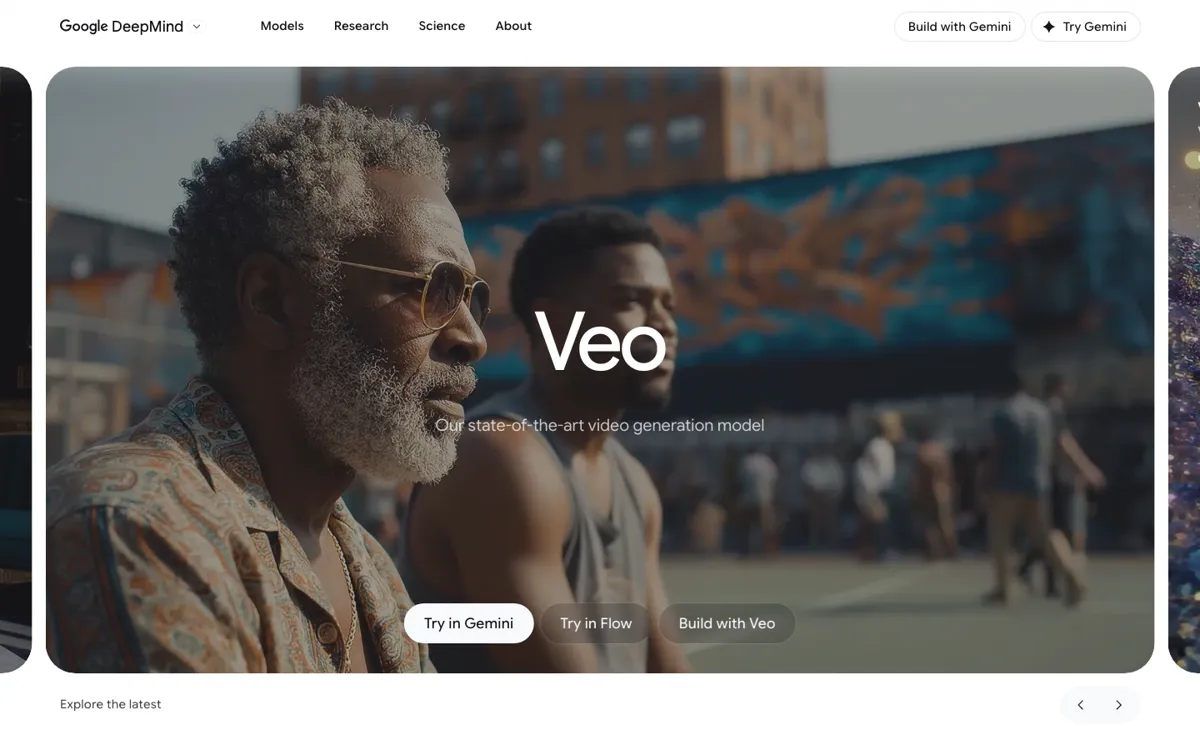

A lot of AI video tools look impressive in a demo and then fall apart the moment you ask for a usable scene with specific framing, believable motion, and sound that does not need to be rebuilt from scratch. That gap matters most when you are trying to pitch a concept, mock up an ad, or test a visual sequence before spending real production time. Veo is Google's way of tackling that problem. Rather than stopping at silent visual generation, Veo is pitched, on both the official model page and in the API docs, around cinematic realism, dialogue, sound effects, and stronger prompt adherence. The practical value is not just prettier output. It is that you can describe a scene more like a shot brief and get back something closer to a scene fragment than a moving wallpaper clip.

What makes Veo more interesting than a standard text-to-video toy is the amount of control Google is surfacing around it. The Gemini API documentation spells out several concrete levers: portrait or landscape output, extending a previously generated clip, specifying first and last frames, and using up to three reference images to guide content. On the Flow side, Google positions Veo as part of a creator studio with ingredients-to-video, object insertion and removal, and camera control. That combination matters because it lets Veo cover both ends of the market: a creator can iterate visually inside Flow, while a product team can call the model through an API. You are not locked into one interface, which is useful if your needs shift from experimentation to production integration.
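To make those levers concrete, here is a minimal sketch of how a product team might model a shot brief in its own code before handing it to whatever client it uses. Everything in it is an assumption made for illustration: `ShotBrief`, its field names, and the dictionary returned by `to_request` are hypothetical and are not the Gemini API's actual request schema. The `16:9` and `9:16` values are likewise assumed stand-ins for landscape and portrait. The only constraints taken from the text above are the orientation choice, clip extension, first and last frames, and the cap of three reference images.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Assumed mapping from orientation to an aspect-ratio string (illustrative only).
ASPECT_RATIOS: Dict[str, str] = {"landscape": "16:9", "portrait": "9:16"}

# The article mentions "up to three reference images".
MAX_REFERENCE_IMAGES = 3


@dataclass
class ShotBrief:
    """Hypothetical container for the controls described above."""

    prompt: str
    orientation: str = "landscape"
    first_frame: Optional[str] = None      # path to an image, if any
    last_frame: Optional[str] = None       # path to an image, if any
    reference_images: List[str] = field(default_factory=list)
    extend_clip: Optional[str] = None      # id of a previously generated clip

    def validate(self) -> None:
        """Raise ValueError if the brief breaks one of the stated limits."""
        if not self.prompt.strip():
            raise ValueError("prompt must not be empty")
        if self.orientation not in ASPECT_RATIOS:
            raise ValueError(f"orientation must be one of {sorted(ASPECT_RATIOS)}")
        if len(self.reference_images) > MAX_REFERENCE_IMAGES:
            raise ValueError(f"at most {MAX_REFERENCE_IMAGES} reference images")

    def to_request(self) -> dict:
        """Build a plain dict that includes only the options that were set."""
        self.validate()
        request = {
            "prompt": self.prompt,
            "aspect_ratio": ASPECT_RATIOS[self.orientation],
        }
        if self.first_frame:
            request["first_frame"] = self.first_frame
        if self.last_frame:
            request["last_frame"] = self.last_frame
        if self.reference_images:
            request["reference_images"] = list(self.reference_images)
        if self.extend_clip:
            request["extend_clip"] = self.extend_clip
        return request


brief = ShotBrief(
    prompt="Slow dolly-in on a barista steaming milk, ambient cafe chatter",
    orientation="portrait",
    reference_images=["barista.png", "cafe_interior.png"],
)
print(brief.to_request())
```

Keeping the brief as a small validated object means the same description can drive quick experiments and a later production integration, with the translation to a real client's parameters isolated in one place.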