What does Luma actually do?

A lot of AI video tools are good at giving you one nice-looking clip and bad at giving you control over what makes that clip look right. That becomes a problem the moment you need continuity, keyframed motion, or footage that looks like it belongs in a real campaign rather than a one-off demo. Luma is interesting because its public positioning is not only about prompt-to-video. Its Ray model pages keep coming back to coherent motion, character reference, keyframes, HDR, and the move from draft to production. In plain terms, Luma is trying to solve the part where you want a generated shot to behave like a shot, not like a lucky accident that happened once and cannot be repeated.

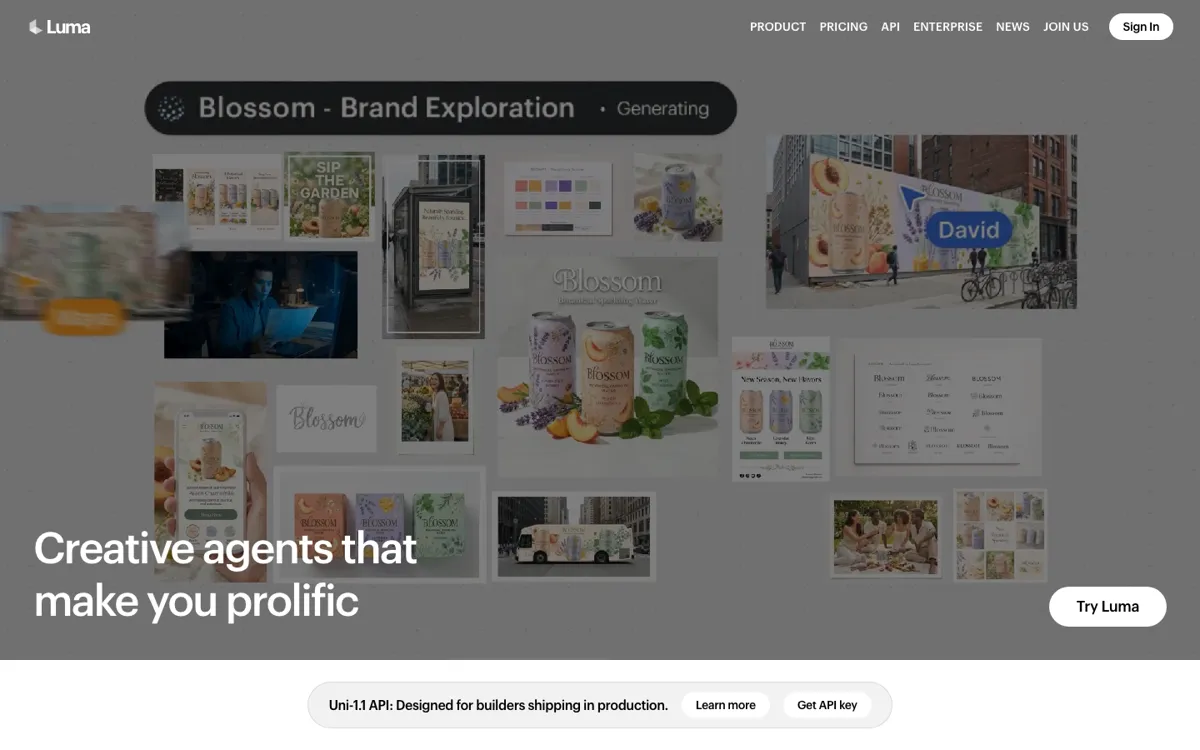

What makes the product stack useful is that Dream Machine is only the visible front door. Behind it, Luma is building a broader platform with Ray video models, API access, and paid tiers that include commercial use and more usage headroom. That matters because some tools are fine for personal experiments but fall apart when you need them to fit a repeatable production rhythm. Luma at least offers a path forward: start with direct creation, compare models, control motion with references and keyframes, and then keep building if the work turns into something a team or product actually depends on.